Background

My primary development machine for the last few years has been a MacBook Air, which is decidedly underpowered for the things I work on these days. It was fine when I first joined Elastic because my predominant workload was PHP-related. You don't need a big machine for working on the PHP client. ;) And it makes a great travel laptop. I really do love my Air.

But as I've moved on to new projects in the core ES codebase, my Air struggles with our builds/tests/benchmarks. The Elasticsearch integration tests involve spinning up multiple nodes and can be pretty resource-intensive. And whenever I've wanted to run large-scale tests (like my “Building a Statistical Anomaly Detector” series), I've had to rent a beefy server at Hetzner.

I was recently given a budget to update my setup, because my Air is literally starting to disintegrate. Most people get a MacBook Pro or build a desktop. I opted for a third option: build a mini cluster based on Xeon E5-2670s.

The Build

I chose Xeon E5-2670s for a few reasons. Despite being several generations old (launched in 2012 and EOL'd in 2015), they are still very capable processors. They sport 8 cores at 2.6GHz and 20MB of cache, which is somewhat competitive with many newer processors. They use (ECC) DDR3 RAM, which is plentiful and cheap. And dual-socket LGA2011 motherboards are not terribly difficult to find.

But the real reason to use the 2670s is that they are crazy cheap right now. Some large datacenters (rumor says it is Facebook) have been decommissioning their 2670s and flooding eBay. Prices have fallen to $70 per CPU.

Which is bananas, because these used to retail for $1400 each! If you want a powerful cluster on the cheap, the E5-2670 is your ticket.

The challenge was finding cheap motherboards. Largely because of the 2670 glut, LGA2011 motherboards are hard to find at a reasonable price. I opted for the Open Compute route, and essentially followed this STH guide. The chassis + 2x motherboard + 4x heatsinks + power supply all together was cheaper than most LGA2011 motherboards alone, which made it a hard deal to pass up.

Final price came out to $504 per node ($469 without shipping).

Since I don't really have any home infra other than a FreeNAS tower, I wasn't worried about the non-standard rack size. I'll probably build a true homelab some day, but my current living circumstances (renting, moving soonish) prevent me from expanding. I'll deal with other rack sizes later. :)

I'm currently using one node as a desktop machine and leave the other three powered off unless I need them. The extra three nodes are suspended to disk with pm-hibernate and woken up with a Wake-on-LAN packet (via wakeonlan or ethtool) when I need them. For now I'm managing the cluster with parallel-ssh, but will likely set up Ansible soon to make my life easier.
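For the curious, a Wake-on-LAN "magic packet" is simple. It is six 0xFF bytes followed by the target NIC's MAC address repeated 16 times, usually sent as a UDP broadcast (port 9 is a common choice). Below is a minimal Python sketch of what a tool like wakeonlan sends. The function names `magic_packet` and `wake` are hypothetical, not part of any tool mentioned here. It also assumes the sleeping node's NIC has magic-packet wake enabled (for example with `ethtool -s eth0 wol g`; the interface name is an assumption).

```python
import socket


def magic_packet(mac: str) -> bytes:
    """Build a WoL magic packet: 6 x 0xFF, then the 6-byte MAC repeated 16 times."""
    hex_digits = mac.replace(":", "").replace("-", "")
    if len(hex_digits) != 12:
        raise ValueError(f"not a 48-bit MAC address: {mac!r}")
    mac_bytes = bytes.fromhex(hex_digits)  # raises ValueError on non-hex input
    return b"\xff" * 6 + mac_bytes * 16


def wake(mac: str, broadcast: str = "255.255.255.255", port: int = 9) -> None:
    """Send the magic packet as a UDP broadcast on the local network."""
    packet = magic_packet(mac)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        # Broadcasting must be explicitly enabled on the socket.
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(packet, (broadcast, port))
```

To wake a node, call `wake("00:11:22:33:44:55")` with that node's real MAC address; the one shown here is a placeholder.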

Node specs

- 2x Xeon E5-2670

- 96GB DDR3 1333MHz ECC RAM (12 of the 16 slots used)

- 1TB 7200rpm Seagate HDD

- Ubuntu 14.04.4 LTS server / desktop

- The “desktop” node also has an HD6350 video card and a PCI USB expander. I purchased an SSD for it as well, but still need to sort out how to mount it (the rack only has two 3.5” caddies).

Cluster stats

- 64 cores, 128 with hyperthreading

- 384GB RAM

- 4TB HDD
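The cluster totals follow directly from the per-node specs. Here is a small Python sketch of the arithmetic: 2 sockets × 8 cores, 2 hardware threads per core with Hyper-Threading, 12 × 8GB sticks, and one 1TB drive per node. The `Node` class and `cluster_totals` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    sockets: int = 2            # dual E5-2670
    cores_per_socket: int = 8   # E5-2670 has 8 physical cores
    threads_per_core: int = 2   # Hyper-Threading
    dimms: int = 12             # 12 of the 16 slots populated
    dimm_gb: int = 8            # 8GB sticks
    disk_tb: int = 1            # one 1TB HDD per node


def cluster_totals(nodes: list[Node]) -> dict[str, int]:
    """Sum physical cores, hardware threads, RAM and disk across all nodes."""
    cores = sum(n.sockets * n.cores_per_socket for n in nodes)
    threads = sum(n.sockets * n.cores_per_socket * n.threads_per_core for n in nodes)
    ram_gb = sum(n.dimms * n.dimm_gb for n in nodes)
    disk_tb = sum(n.disk_tb for n in nodes)
    return {"cores": cores, "threads": threads, "ram_gb": ram_gb, "disk_tb": disk_tb}


totals = cluster_totals([Node()] * 4)
print(totals)  # {'cores': 64, 'threads': 128, 'ram_gb': 384, 'disk_tb': 4}
```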

Practical considerations

-

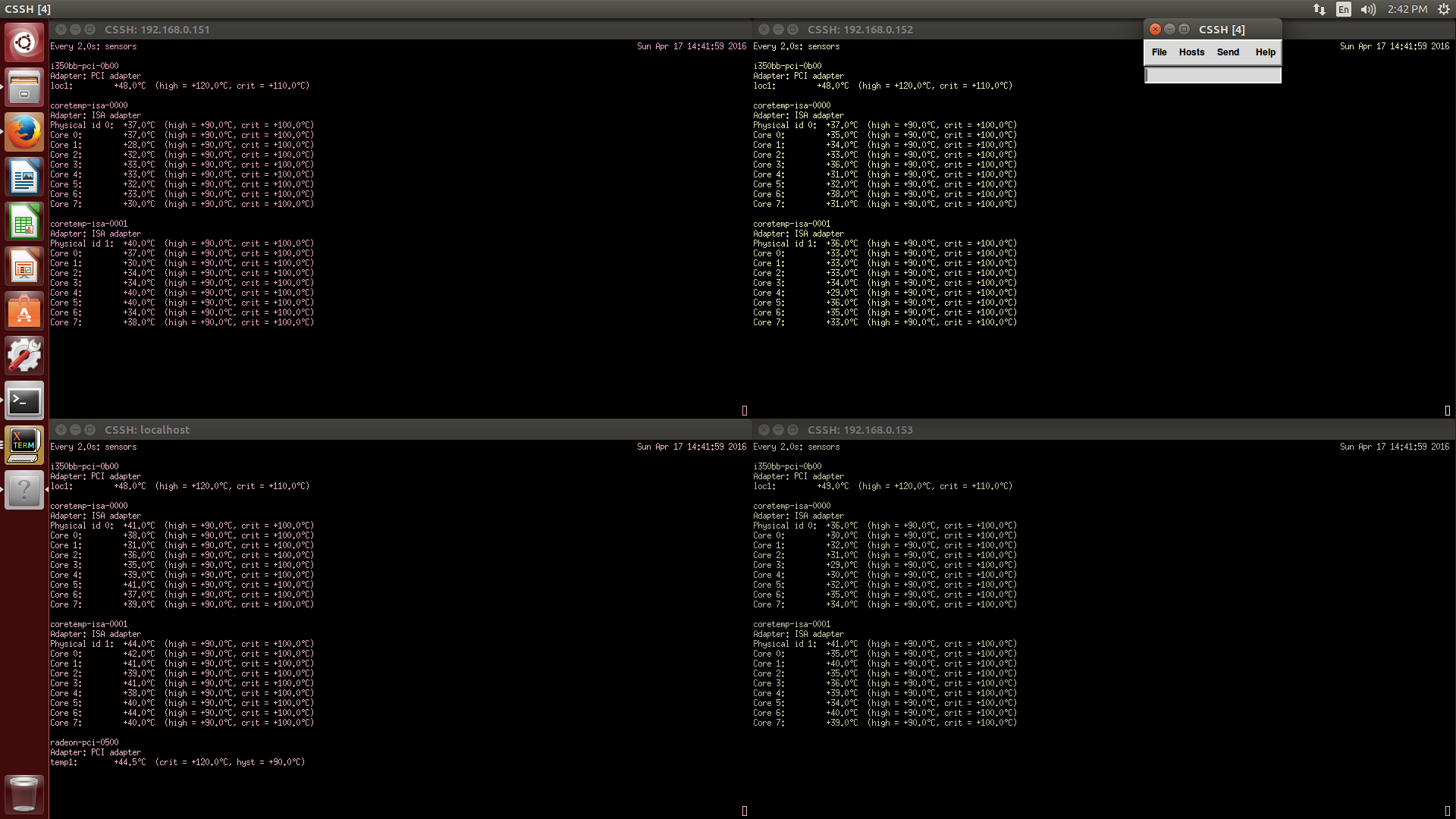

Temperatures idle around 32-35C. At 100% utilization, the temp cranks up to ~93C before the fans kick into high gear, then settle down to ~80C.

-

Noise is a pleasant 35dB idle, which honestly is quieter than the Thinkserver on the floor. At 100% utilization the fans go into “angry bee” mode and noise rises to around 56dB. It's loud, but not unbearable, and I'll only be using the full resources for long tests, likely overnight. While idling, the 60x60x25 fan in the PSU is far louder than the node fans, and gives off a bit of a high-pitch whine due to thinner size.

-

As someone who has never worked with enterprise equipment before…these units were a pleasure to assemble! Only tool required was a screwdriver to install the heatsinks, everything else is finger-accessible. Boards snap into place with a latch, baffles lock onto the sushi-boat, PSU pops out by pulling a tab, hard drive caddies clip into place. Just a really pleasant experience, I kept saying “oh, that's a nice feature” while assembling the thing.

-

The only confusing aspect was the boot process and what the lights mean. Blue == power, followed after a few seconds by yellow which equals HDD activity. If you boot and it stays blue, something is wrong. In my case it was a few sticks of bad RAM.

-

Nodes are configured to boot in staggered sequence. The PSU turns on, fans slow down, first node powers on, about 30 seconds later the second node powers on.

-

There are two buttons on the board: red (power), grey (reset). I think… it's confusing because the boards like to reboot themselves if they lose power, even when you manually switch them off. The power-on preference can be changed in BIOS, default is “last state”. I haven't played with it yet

Power Usage

One PSU, one node:

- idle: 85W / 1 amp

- 1 core: 126W

- 2 core: 156W

- 4 core: 177W

- 8 core: 218W

- 16 core: 292W
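To see how draw scales with load, the single-node numbers above can be turned into watts per additional loaded core. This short Python sketch treats "idle" as 0 loaded cores; `marginal_watts_per_core` is a hypothetical helper. On these measurements the first core adds about 41W and the second about 30W, while each core beyond that adds only about 9-10.5W.

```python
# Measured wall draw for one node on one PSU: (loaded cores, watts).
# "idle" is treated as 0 loaded cores for this calculation.
measurements = [(0, 85), (1, 126), (2, 156), (4, 177), (8, 218), (16, 292)]


def marginal_watts_per_core(points):
    """Watts added per extra loaded core between consecutive measurements."""
    out = []
    for (c0, w0), (c1, w1) in zip(points, points[1:]):
        out.append((c0, c1, (w1 - w0) / (c1 - c0)))
    return out


for c0, c1, w in marginal_watts_per_core(measurements):
    print(f"{c0:>2} -> {c1:>2} cores: {w:5.2f} W/core")
```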

All four nodes running:

- idle: 232W

- 64 cores: 1165W

- 64 cores and all fans at max: 1260W / 11 amps

The transformer is rated for 2000 watts. Applying a safety factor of 1.5 to the 1260W peak draw gives 1890W, which is still under that rating, so max draw should be fine. The cable connecting the transformer to the wall is 14 AWG and rated at 15 amps, so that should be fine too. It does get a little warm; I may move to two transformers just for peace of mind :)
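The headroom check above is easy to write down explicitly. This Python sketch assumes the 1.5 safety factor is a multiplier on the measured peak, which is how the 1890W figure comes out; `has_headroom` is a hypothetical helper. Running the same check on the cord is tighter. The raw 11A is under the 15A rating, but 11A × 1.5 = 16.5A is not.

```python
RATED_W = 2000        # transformer rating (watts)
PEAK_W = 1260         # measured: 64 cores + all fans at max
SAFETY_FACTOR = 1.5

CABLE_RATED_A = 15    # 14 AWG cord from wall to transformer
PEAK_A = 11           # measured current at peak


def has_headroom(peak, rating, factor=1.0):
    """True if peak load, scaled by a safety factor, stays within the rating."""
    return peak * factor <= rating


print(PEAK_W * SAFETY_FACTOR)                               # 1890.0
print(has_headroom(PEAK_W, RATED_W, SAFETY_FACTOR))         # True
print(has_headroom(PEAK_A, CABLE_RATED_A))                  # True
print(has_headroom(PEAK_A, CABLE_RATED_A, SAFETY_FACTOR))   # False
```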

Why not use AWS?

It's a good question. Depending on usage, AWS might be cheaper. Or might not.

The d2.4xlarge is probably the closest comparison (similar specs, although a newer CPU). AWS actually has E5-2670s available, but the rest of the specs don't match.

The d2.4xlarge costs $2.76/hr, so four nodes would be about $11/hr. This project was roughly $2000 including shipping. So that's $2000 / $11.04 per hour ≈ 181 hours before I break even, or around 8 days of 24/7 usage. You could probably get a cheaper price by tweaking the instances (less disk, for example), or use a larger cluster of smaller/cheaper nodes, but then you're managing a bigger cluster.
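The break-even estimate is simple enough to script, which makes it easy to try other instance prices or node counts. This Python sketch uses the figures above: on-demand d2.4xlarge pricing and a ~$2000 build. Like the estimate in the text, it ignores the home cluster's electricity cost. `break_even_hours` is a hypothetical helper.

```python
HOURLY_PER_NODE = 2.76   # d2.4xlarge price quoted above (USD/hr)
NODES = 4
BUILD_COST = 2000        # rough total including shipping (4 x $504 = $2016)


def break_even_hours(build_cost, hourly_per_node, nodes):
    """Hours of cloud usage that would cost as much as the one-time build."""
    return build_cost / (hourly_per_node * nodes)


hours = break_even_hours(BUILD_COST, HOURLY_PER_NODE, NODES)
print(f"{hours:.0f} hours ~ {hours / 24:.1f} days of 24/7 usage")
# 181 hours ~ 7.5 days of 24/7 usage
```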

As a company, we do run a lot of stuff on AWS (all our CI infrastructure, a lot of demos, some large tests, etc). But a home cluster had a few practical considerations which were important to me:

- One of the nodes will be my desktop. I wanted an E5-2670-based machine, and an equivalent non-OCP single server would have been more expensive than a 2-node OCP chassis.

- This is a bit of a silly reason, but I'm on DSL. Really bad, end-of-the-line DSL, too. If I need to transfer a big dataset to AWS, it'll take all night (yay, 100KB/s upload). Local infra is just easier for me to manage and modify.

- Consistent hardware is nice for profiling and benchmarks, rather than hoping my AWS machines are spec'd the same each time. There's also less worry about sporadic outages ruining my tests.

- Home gear is just easier to manage than AWS, in my opinion. Subjective, though :)

Other Q&A

I also posted this to Reddit and STH; some interesting discussion can be found there.

Photos of the build

The components all laid out.

- PCI USB hub

- Radeon HD6350

- x4 Seagate 1TB 7200 RPM HDDs (ST1000DM003; oops, I'll make sure backups are in place…)

- TP-LINK TL-SG108 8-Port unmanaged GigE switch

- SanDisk Ultra II 120GB SSD

- x50 Kingston 8GB 2Rx4 PC3L-10600R

- x8 Xeon E5-2670

- ELC T-2000 2000-Watt Voltage Converter Transformer

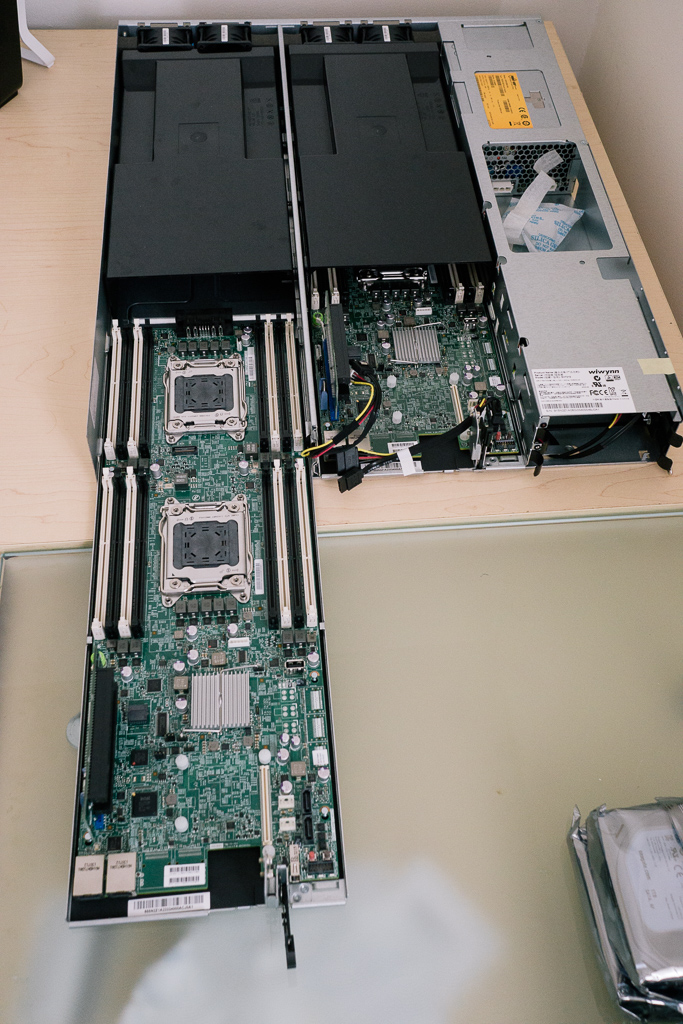

The Wiwynn OCP chassis in its ESD bag

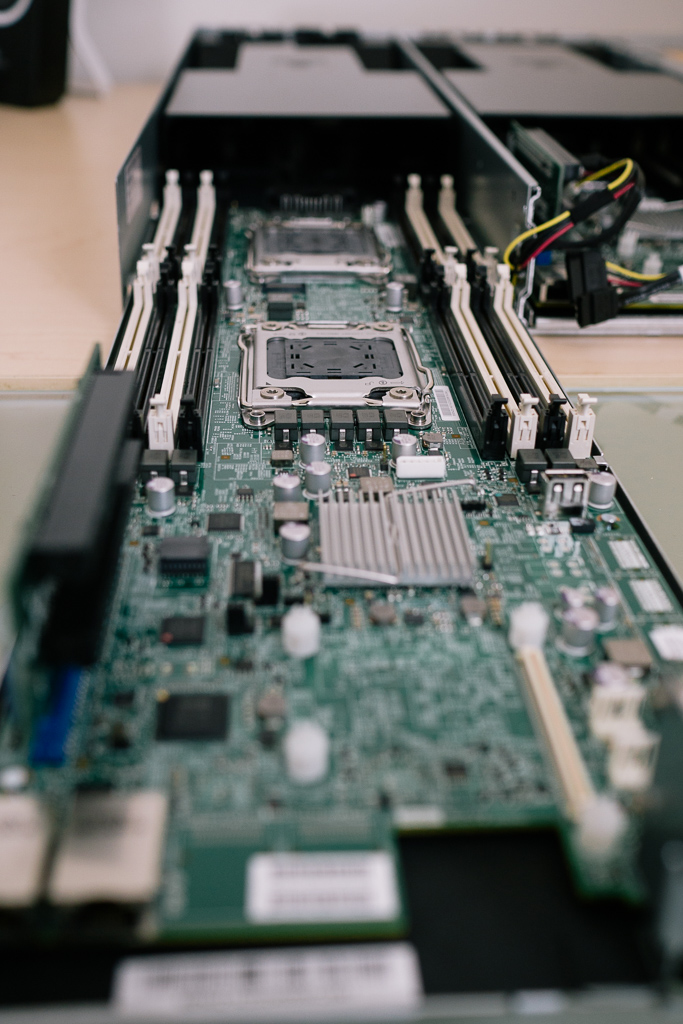

Each “sushi boat” node slides out on a metal tray

Dual GigE ports on the motherboard

Dual 60x60x38mm PWM fans at the front. The fans are mounted with rubber standoffs to reduce noise, and padded with foam. At the front is the power distribution board which the sushi boat plugs into

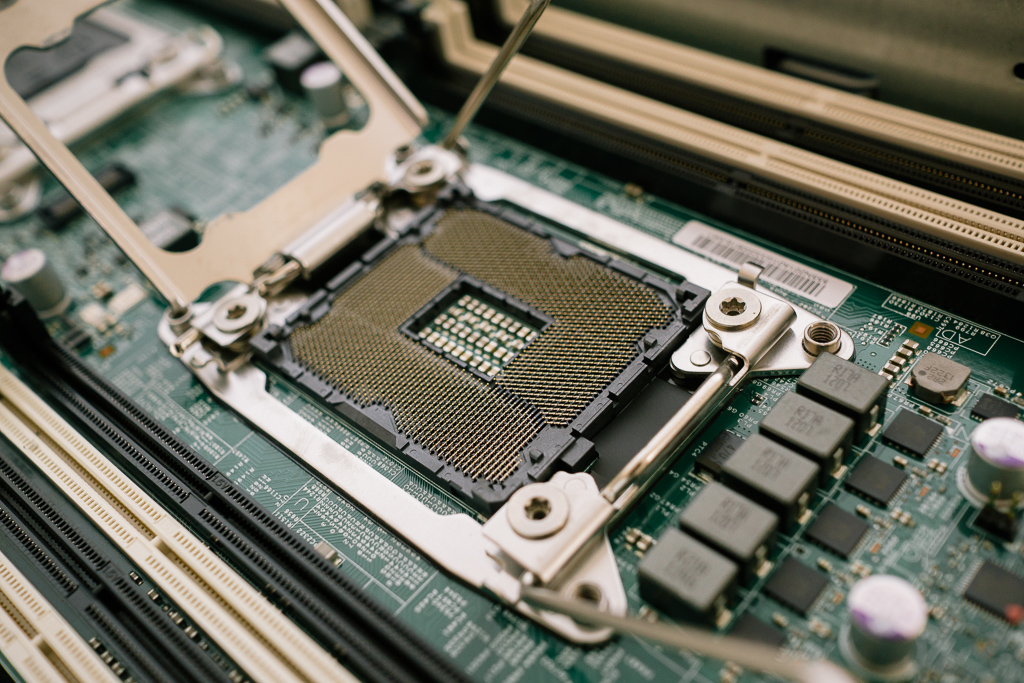

Socket open and ready for a CPU

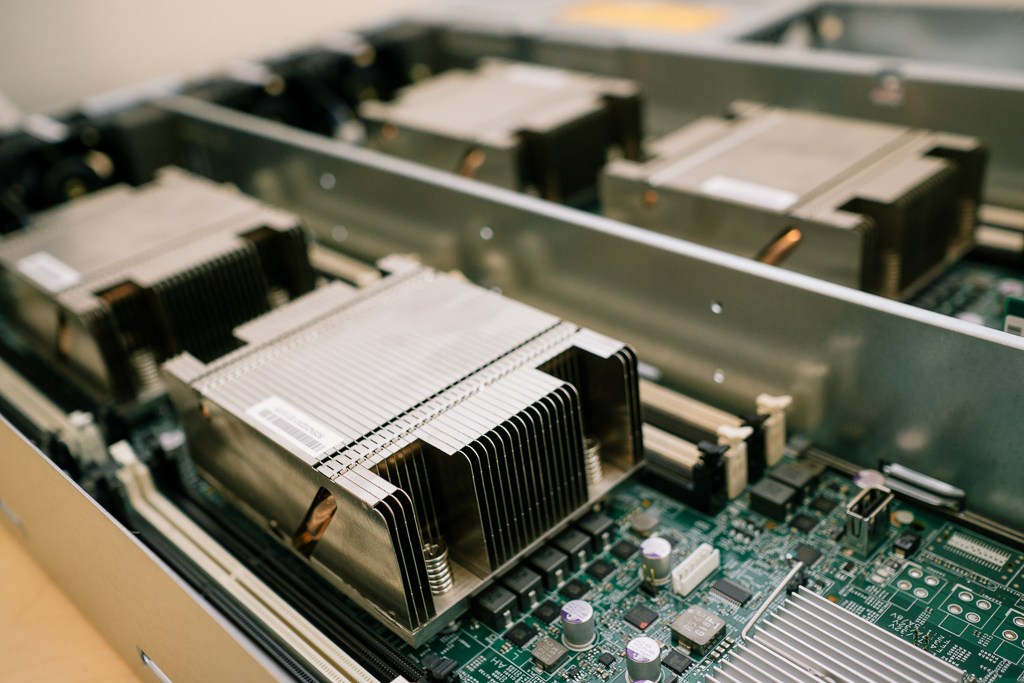

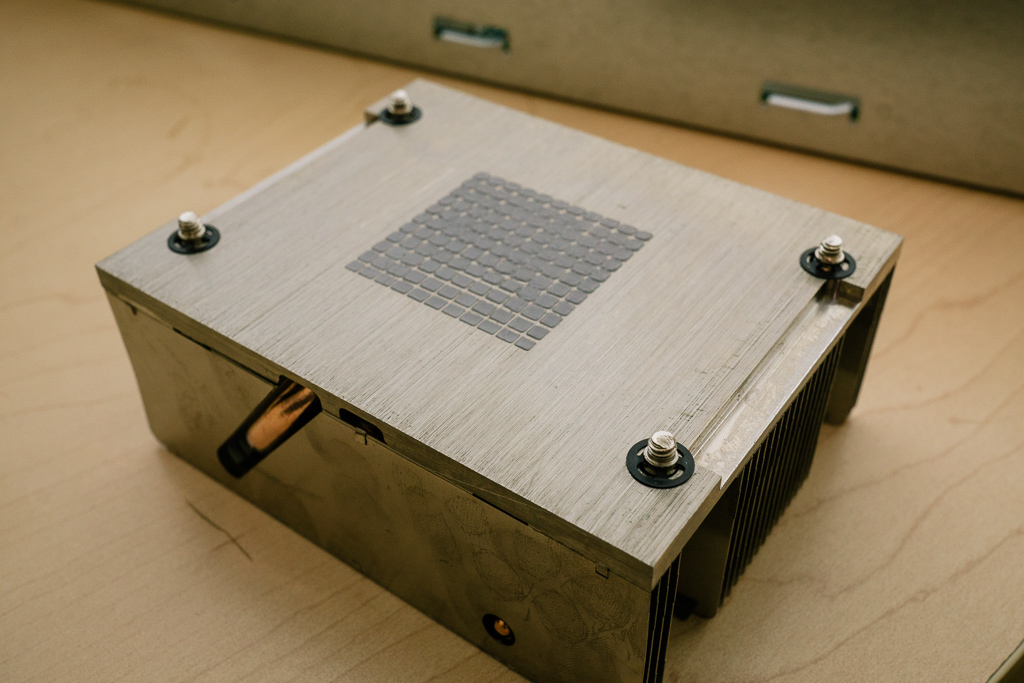

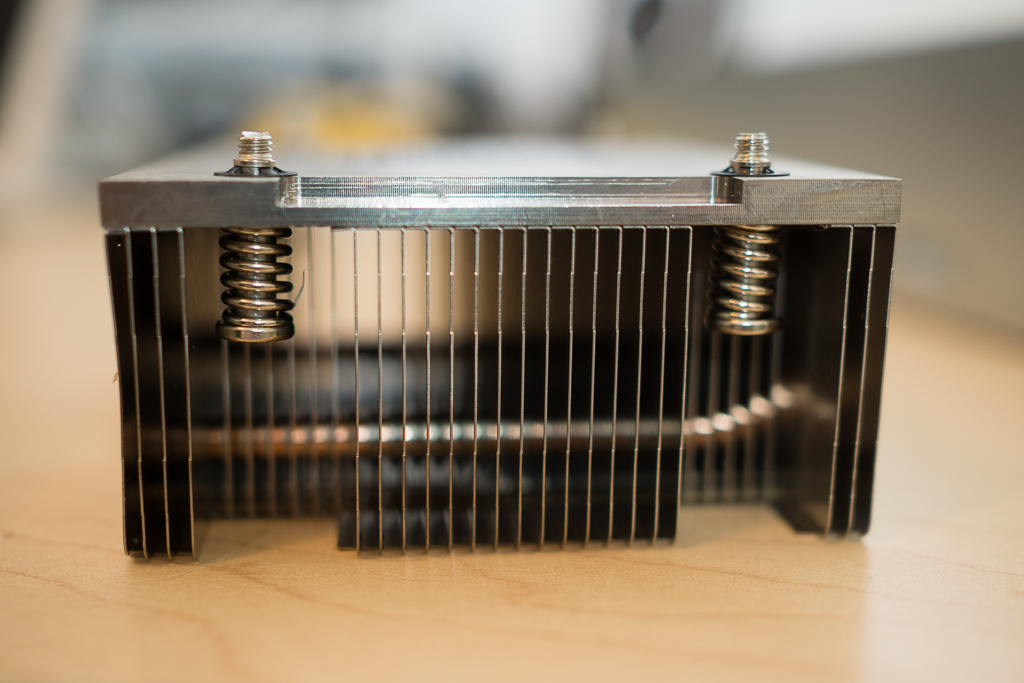

The heatsinks come pre-coated with thermal compound

Really nice little units. Spring-loaded screws to make installation easy, heatpipe, no sharp edges, top of heatsink is folded over.

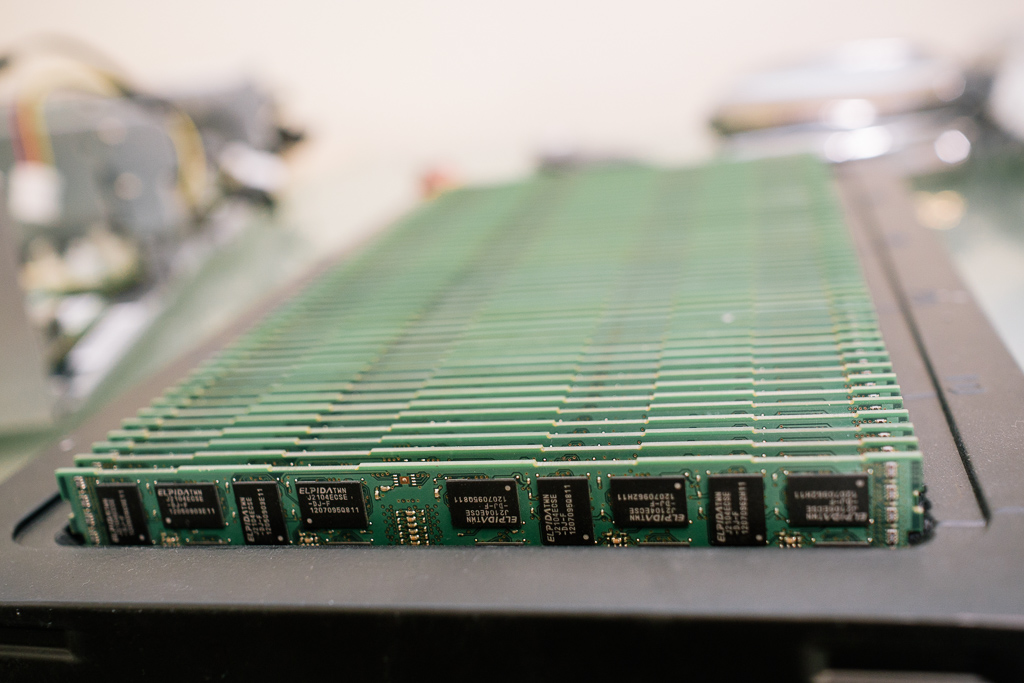

Glorious, glorious RAM. x50 8GB DDR3 sticks, 400GB total. Remarkably, only two sticks were bad in this batch.

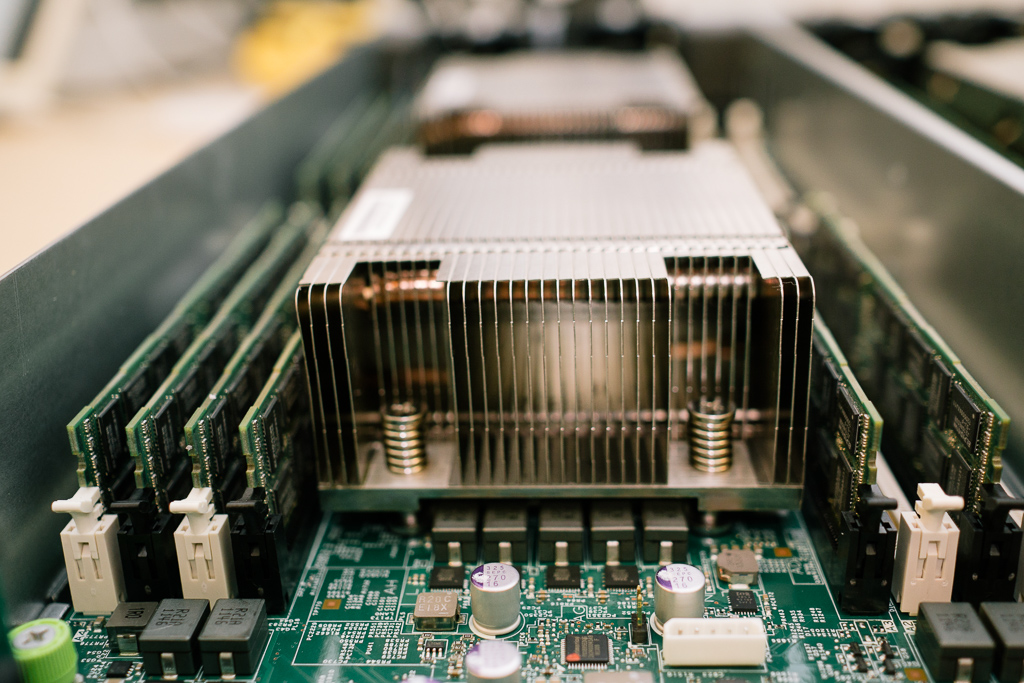

RAM installed. Each node has 96GB of RAM.

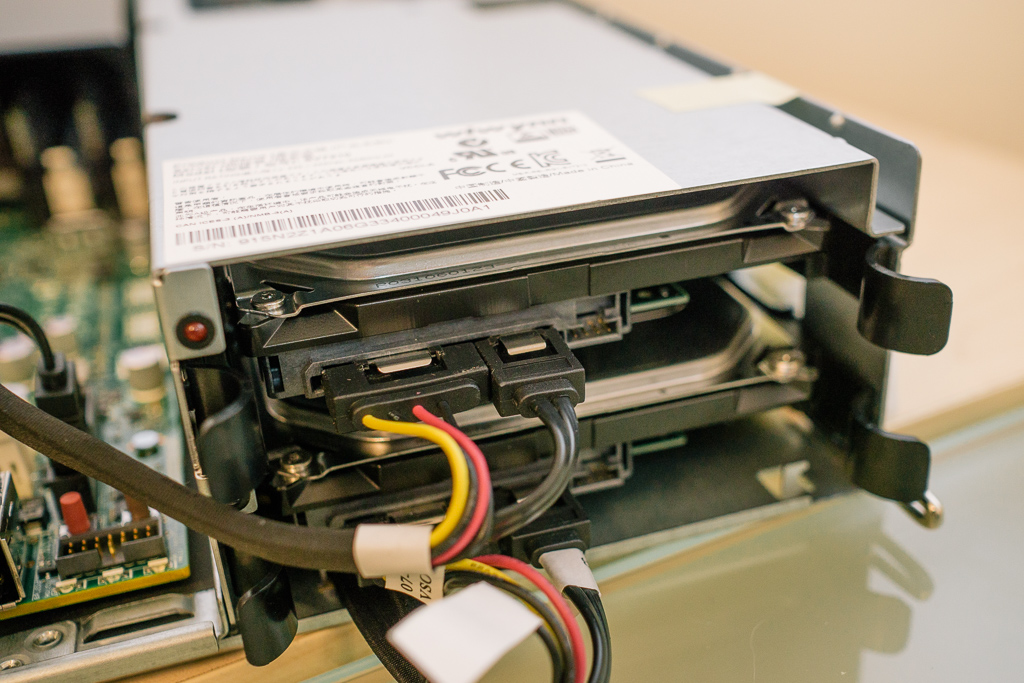

x2 1TB drives installed in hotswap caddies. The HDD mounts are also isolated by rubber standoffs to help reduce vibration

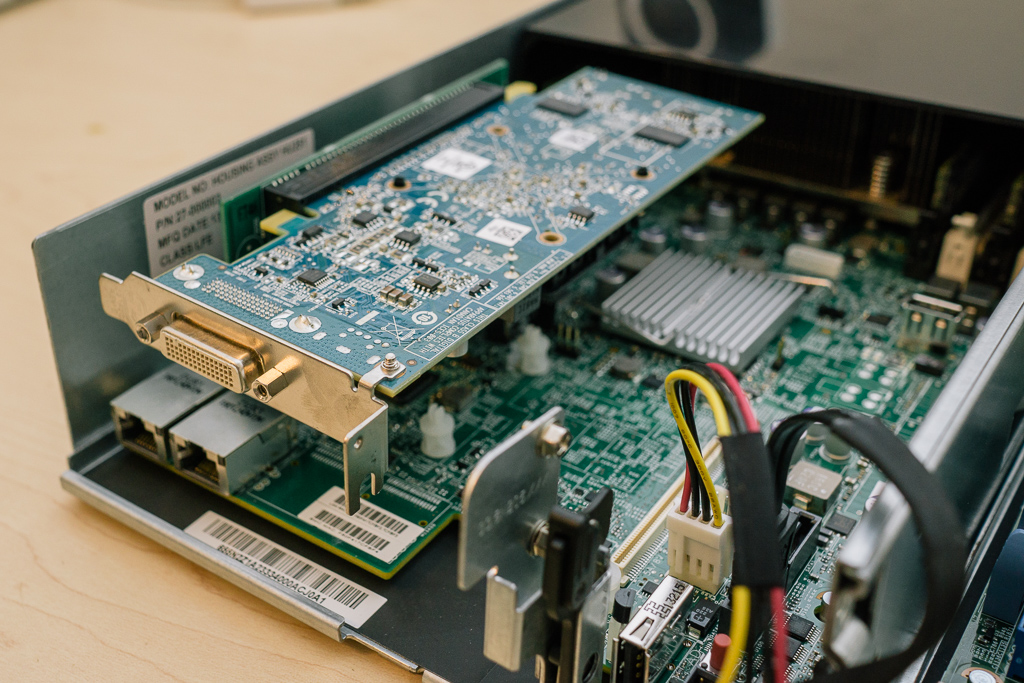

HD6350 video card installed in mezzanine to make booting/installation easier

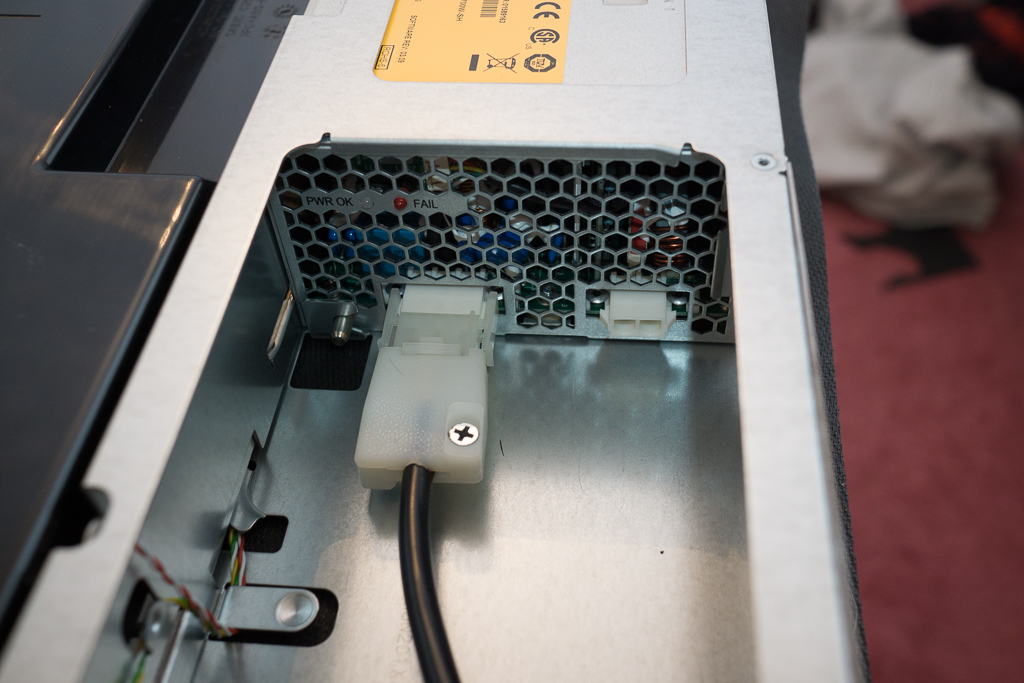

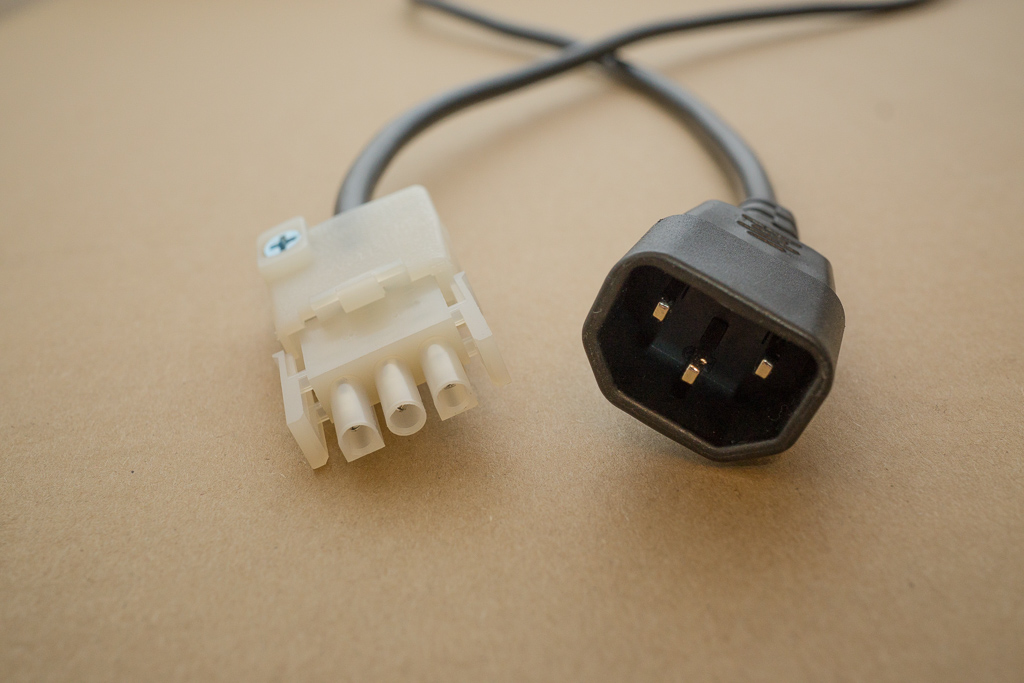

The power cable connects to the PSU with a 3-prong molex style connector

The other end terminates in a male IEC C13 connector.

The first node sits on three strips of neoprene rubber. The second node sits on top of the first, also separated by a layer of neoprene. The top is covered by some cardboard (temporary). It isn't needed for airflow, since the nodes have plastic baffles… It's just for my peace of mind, so I don't accidentally drop/spill something.

While idling, the servers are very quiet: ~35dB

When at 100% utilization, the noise rises to around 56dB, which is loud but not awful.

The step-up transformer, power strip and a Thinkserver TS140 running FreeNAS.

I know, I need to dust.

Temperatures idle around 32-35C. At 100%, temps rise to ~91C before the fans kick into “angry bee mode”, and temps fall back down to around 70-80C and remain there.
